ScaleOps Raises $130M to Fix the AI Cloud Tax

Published: Apr 20, 2026 at 02:21 UTC

- ★Real-time GPU infrastructure automation

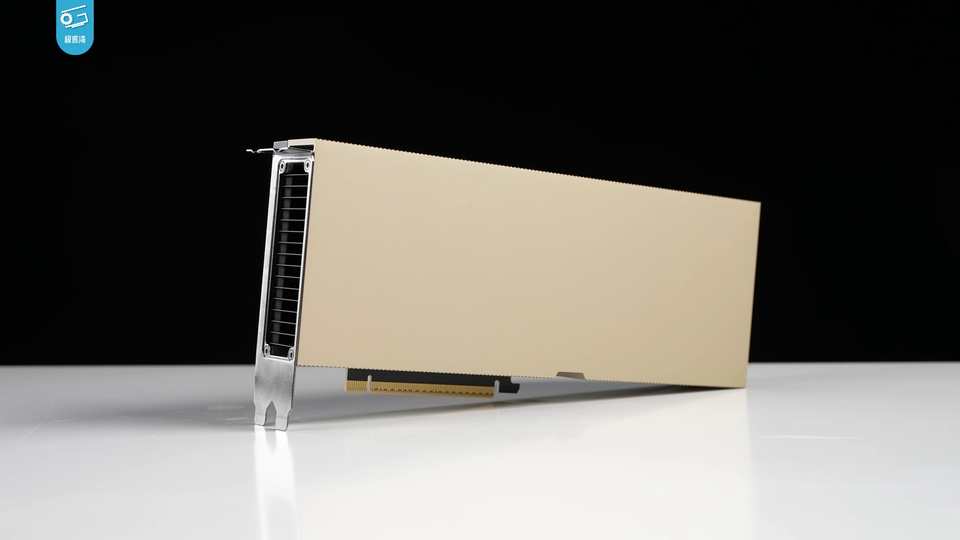

- ★Mitigating acute H100 supply shortages

- ★Reducing wasted cloud compute spend

The current AI gold rush has a dirty secret: most companies are paying for compute they aren't actually using. While the world fights over NVIDIA H100 allocations, the real bottleneck is often inefficient orchestration that leaves expensive GPUs idling or throttled.

ScaleOps has secured $130 million to tackle this gap by automating infrastructure in real time. According to TechCrunch, the goal is to eliminate the manual guesswork involved in scaling AI workloads, which currently drains budgets and slows deployment cycles.

This isn't about building a better model, but about building a better pipe for the models we already have. It is a pragmatic play in a market otherwise obsessed with parameter counts and emergent capabilities.

Infrastructure automation vs. the GPU shortage

Early signals suggest the technology focuses on dynamic resource allocation to optimize GPU utilization. If confirmed, this approach could allow enterprises to squeeze more performance out of existing clusters without waiting for new hardware shipments that are perpetually delayed.
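To make "dynamic resource allocation" concrete, here is a minimal sketch of a utilization-driven sizing rule. It is not ScaleOps' method, which has not been described publicly in this detail. It borrows the proportional formula documented for Kubernetes' Horizontal Pod Autoscaler (desired = ceil(current × observed / target)) and applies it to GPU counts. The function name `recommend_gpus`, the 75% target, and the sample figures are all hypothetical.

```python
import math
from statistics import mean


def recommend_gpus(allocated, utilization_samples,
                   target_utilization=0.75, min_gpus=1):
    """Suggest a GPU count so average utilization lands near the target.

    Uses the proportional rule: desired = ceil(allocated * observed / target).
    All names and defaults here are illustrative assumptions.
    """
    if not utilization_samples:
        # No telemetry yet: leave the current allocation untouched.
        return allocated
    observed = mean(utilization_samples)
    desired = math.ceil(allocated * observed / target_utilization)
    # Never scale a running workload below the configured floor.
    return max(min_gpus, desired)


# Hypothetical workload: 8 GPUs allocated, averaging 37.5% utilization.
print(recommend_gpus(8, [0.25, 0.5]))
```

With these made-up numbers the rule suggests halving the allocation. A production system would also need smoothing, cooldown periods, and safety margins, which is where the trust question becomes real.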

There is speculation that ScaleOps is specifically targeting heavy enterprise workloads where inference and training bottlenecks are most acute. For the developer community, this is a welcome shift toward operational sanity over raw scale.

However, the real test remains whether automated orchestration can actually outperform a dedicated DevOps team in a high-stakes production environment. Moving from static provisioning to real-time automation is a steep climb that requires deep trust in the underlying logic. The industry is betting that efficiency is the only way to sustain the current AI spend trajectory.

A related open question is whether ScaleOps' automation can handle the volatility of diverse LLM workloads without causing systemic instability. Can an algorithm truly replace the intuition of a senior infrastructure engineer during a peak load event?