Claude Code’s Auto Mode: Safety Theater or Real Progress?

Published: Apr 14, 2026 at 02:21 UTC

- ★Anthropic’s binary safety tradeoff gets a middle option

- ★Developers’ frustration with all-or-nothing checks addressed

- ★Auto Mode’s real test: contextual judgment vs. false confidence

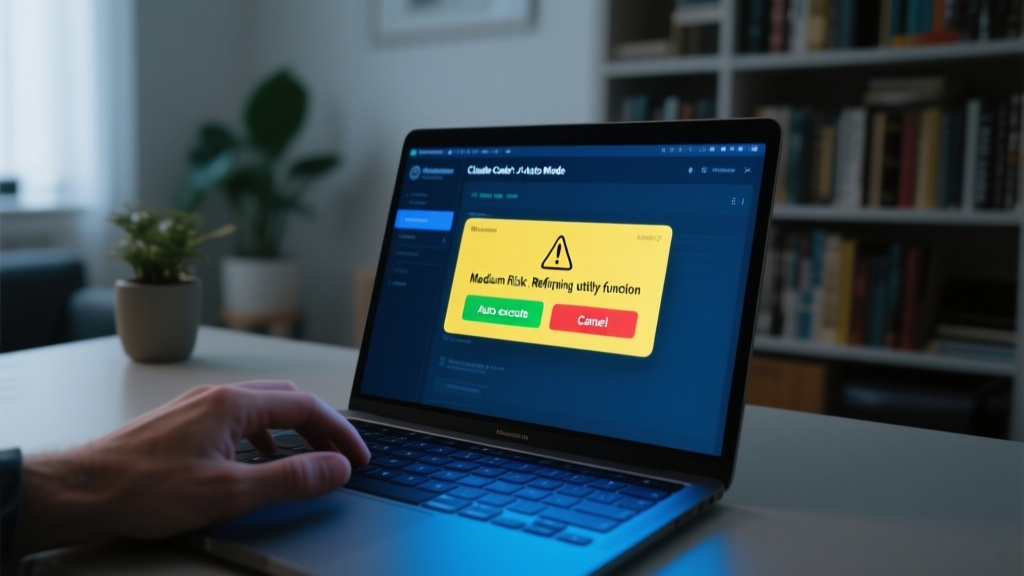

Anthropic’s Claude Code has long forced developers into an unenviable choice: babysit every line of AI-generated code like a paranoid intern, or flip the safety switch to off and pray. The new Auto Mode promises to split the difference by automating low-risk actions while flagging the rest. Whether that is safety theater or genuine improvement will come down to whether the system’s contextual judgment holds up under real-world pressure.

The move addresses a documented pain point among developers, who’ve grumbled about the clumsy binary of manual approvals or disabled protections. Early signals suggest Auto Mode uses dynamic analysis to categorize actions—think ‘rename a variable’ (auto-approved) versus ‘execute a shell command’ (flagged). But as any engineer who’s debugged a ‘smart’ assistant knows, the devil is in the edge cases.
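To make that split concrete, here is a minimal sketch of default-deny triage. It is not Anthropic’s implementation: the action names, the `Action` type, and the `triage` function are all hypothetical, and a real system would presumably weigh far more context than an action’s label.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    FLAG = "flag"


# Hypothetical allowlist; this is an assumption, not Claude Code's real taxonomy.
LOW_RISK_KINDS = {"rename_variable"}


@dataclass(frozen=True)
class Action:
    kind: str    # e.g. "rename_variable" or "execute_shell" (illustrative labels)
    target: str  # what the action touches, kept only for display


def triage(action: Action) -> Decision:
    """Auto-approve only explicitly allowlisted kinds; flag everything else."""
    if action.kind in LOW_RISK_KINDS:
        return Decision.AUTO_APPROVE
    return Decision.FLAG


if __name__ == "__main__":
    for action in (
        Action("rename_variable", "tmp -> user_count"),
        Action("execute_shell", "rm -rf build/"),
        Action("something_unrecognized", "?"),
    ):
        print(action.kind, "->", triage(action).value)
```

The design choice worth noticing is the fallback: anything not explicitly known to be low-risk gets flagged, so the edge cases land in front of a human instead of slipping through.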

This isn’t Anthropic’s first attempt to thread the needle between speed and caution. The company’s previous iterations of Claude Code leaned heavily on manual oversight, a choice that won praise from security-conscious teams but frustrated those chasing velocity. The question now: Is Auto Mode a refined safety net, or just a way to make developers feel less reckless while shipping faster?

The gap between ‘fully manual’ and ‘no guardrails’ just got narrower—if it works

The competitive subtext here is impossible to ignore. GitHub Copilot and Amazon Q already offer varying degrees of automated execution, but Anthropic’s pitch hinges on nuanced risk assessment, a claim that’ll live or die by its false-positive rate. If Auto Mode constantly second-guesses harmless operations, it’ll join the graveyard of ‘smart’ tools that created more work than they saved. If it’s too permissive, we’re back to square one: guardrails off in all but name.
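Both failure modes can be measured. A rough sketch, assuming a team keeps a hand-labeled log of what the assistant flagged and what actually turned out to be harmful (the log format and the `error_rates` helper are made up for illustration, not part of any Anthropic tooling):

```python
from typing import Iterable, Tuple


def error_rates(log: Iterable[Tuple[bool, bool]]) -> Tuple[float, float]:
    """Return (false_positive_rate, false_negative_rate).

    Each log entry is (was_flagged, was_harmful), labeled after the fact.
    """
    fp = tn = fn = tp = 0
    for was_flagged, was_harmful in log:
        if was_harmful:
            if was_flagged:
                tp += 1  # harmful and caught
            else:
                fn += 1  # harmful but auto-approved: too permissive
        else:
            if was_flagged:
                fp += 1  # harmless but flagged: needless interruption
            else:
                tn += 1  # harmless and auto-approved
    # Guard against empty classes so the function never divides by zero.
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    fnr = fn / (fn + tp) if (fn + tp) else 0.0
    return fpr, fnr


if __name__ == "__main__":
    sample_log = [
        (True, False), (False, False), (False, False), (False, False),
        (True, True), (False, True),
    ]
    fpr, fnr = error_rates(sample_log)
    print(f"false-positive rate: {fpr:.2f}, false-negative rate: {fnr:.2f}")
```

A high first number is the “more work than it saved” scenario; a high second number is the too-permissive one.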

Developer forums are cautiously optimistic, with some noting the feature’s potential to reduce ‘approval fatigue’ in large projects. Others, though, point out that the real bottleneck isn’t approval speed—it’s whether the AI’s risk model aligns with their threat model. A startup moving fast might tolerate different tradeoffs than a fintech team.

The broader industry implication? This is Anthropic’s bid to own the ‘responsible speed’ niche, a positioning that could appeal to enterprises allergic to both GitHub’s ‘move fast’ ethos and open-source tools’ lack of guardrails. But until we see real-world failure rates—not just demo-day success stories—the jury’s out on whether Auto Mode is a feature or a fig leaf.

In other words, we’ve seen this movie before: an AI tool that promises to ‘just work’ until it doesn’t. The real innovation here isn’t the mode itself, but whether Anthropic can resist the urge to call every incremental tweak a breakthrough.