AMD’s 2026 data center push: specs vs. real-world muscle

Published: Apr 16, 2026 at 06:19 UTC

- Helios platform targets AI workloads

- Zen 6 may outpace Intel’s Granite Rapids

- CDNA architecture underpins MI500 GPUs

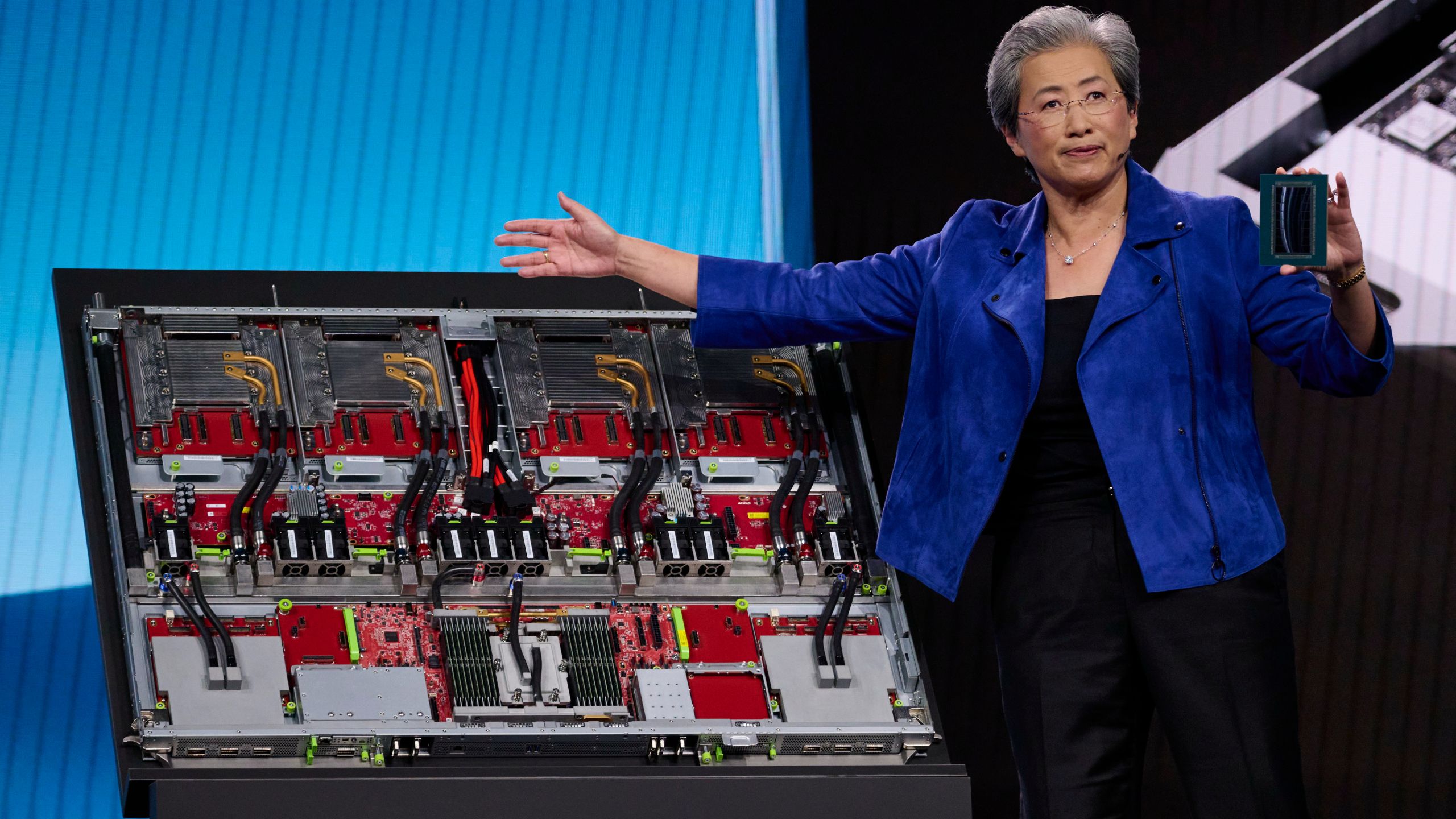

AMD’s 2026–2027 data center roadmap isn’t just a slide deck—it’s a direct challenge to NVIDIA’s AI accelerator dominance and Intel’s CPU stronghold. The Helios platform and Instinct MI500 GPUs aim to carve out a slice of the $40B+ AI hardware market, where NVIDIA’s H100 and B100 currently dictate terms. For enterprise IT teams, this means potential cost savings—AMD’s GPUs have historically undercut NVIDIA’s pricing by 20–30%—but only if performance keeps pace.
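To make "only if performance keeps pace" concrete, here is a minimal sketch of the break-even arithmetic. It assumes the only things that matter are purchase price and delivered performance, which ignores power, software porting, and support costs. The function name `break_even_performance` is hypothetical, and the 20% and 30% discounts are the historical range quoted above, not MI500 pricing.

```python
def break_even_performance(discount: float) -> float:
    """Return the fraction of a rival's performance a cheaper part must
    deliver to match the rival's performance per dollar.

    If the cheaper part costs (1 - discount) times the rival's price,
    equal performance per dollar requires
    perf_cheaper / perf_rival >= 1 - discount.
    """
    if not 0.0 <= discount < 1.0:
        raise ValueError("discount must be in the range [0, 1)")
    return 1.0 - discount


# Discounts taken from the 20-30% range cited in the article.
for discount in (0.20, 0.30):
    needed = break_even_performance(discount)
    print(f"{discount:.0%} cheaper: needs at least {needed:.0%} of rival performance")
```

Under these assumptions, a 30% discount stops being a saving once delivered performance falls below roughly 70% of the competing part.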

The Zen 6 architecture is the wildcard. Early benchmarks suggest it could deliver a 15–20% IPC uplift over Zen 4, which would put it ahead of Intel’s Granite Rapids in raw throughput. But IPC isn’t everything. Intel’s oneAPI and NVIDIA’s CUDA ecosystems still lock in developers, and AMD’s ROCm software stack remains a work in progress. For now, the real battle isn’t just about teraflops—it’s about who can make those teraflops usable at scale.

Then there’s the CDNA architecture, which underpins the MI500 GPUs. If AMD can deliver on its promise of 2x the AI training performance per watt, it could force NVIDIA to rethink its pricing. But enterprise buyers are notoriously risk-averse. A 2x efficiency gain won’t matter if the software stack lags or if cloud providers hesitate to adopt the hardware.
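The interaction between hardware efficiency and software maturity can be sketched with simple arithmetic. The snippet below assumes energy for a fixed training job scales inversely with performance per watt, and models a lagging software stack as a single `realized_fraction` of the hardware's efficiency that actually reaches the workload. Both the function `energy_saving` and the 60% figure are illustrative assumptions, not measured ROCm or MI500 numbers.

```python
def energy_saving(efficiency_gain: float, realized_fraction: float = 1.0) -> float:
    """Fraction of energy saved on a fixed job, relative to the baseline.

    efficiency_gain:   claimed performance-per-watt multiple (2.0 means 2x).
    realized_fraction: share of that gain the software stack actually
                       delivers (hypothetical; 1.0 means all of it).
    """
    effective_gain = efficiency_gain * realized_fraction
    if effective_gain <= 0:
        raise ValueError("effective gain must be positive")
    # Energy for a fixed amount of work is proportional to 1 / (perf per watt).
    return 1.0 - 1.0 / effective_gain


print(f"Full 2x gain realized:    {energy_saving(2.0):.0%} less energy")
print(f"Only 60% of it realized: {energy_saving(2.0, 0.6):.0%} less energy")
```

In this toy model, a 2x claim that software only delivers at 60% shrinks from halving the energy bill to saving about a sixth of it, which is the sense in which the efficiency gain depends on the stack.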

The gap between roadmap promises and enterprise adoption

Venice and Verano, the codenames for AMD’s upcoming EPYC server CPUs, hint at a broader strategy: unifying CPU and GPU roadmaps under a single platform. This could simplify procurement for data center operators, but it also raises the stakes, because a misstep in one product line could drag down the others. AMD’s EPYC CPUs already power 30% of hyperscale servers, but its AI GPUs hold less than 5% of the market, a gap it’s desperate to close.

For developers, the Helios platform’s success hinges on ROCm’s maturity. NVIDIA’s CUDA has a decade-long head start, and rewriting code to target AMD hardware is a non-trivial effort. Some companies, like Hugging Face, have started optimizing for ROCm, but adoption remains patchy. The real test will be whether AMD can convince cloud giants like AWS and Azure to offer MI500 instances at scale.

The market context is brutal. Intel’s Sierra Forest and NVIDIA’s Grace Hopper are already vying for the same customers. AMD’s roadmap is ambitious, but ambition alone won’t displace entrenched players. The question isn’t whether AMD can build these chips—it’s whether it can build an ecosystem around them.

Will 2026 be the year AMD finally cracks the AI accelerator market, or will NVIDIA’s ecosystem lock-in prove too strong? The answer may depend less on teraflops and more on which company can make its hardware the easiest to deploy at scale.