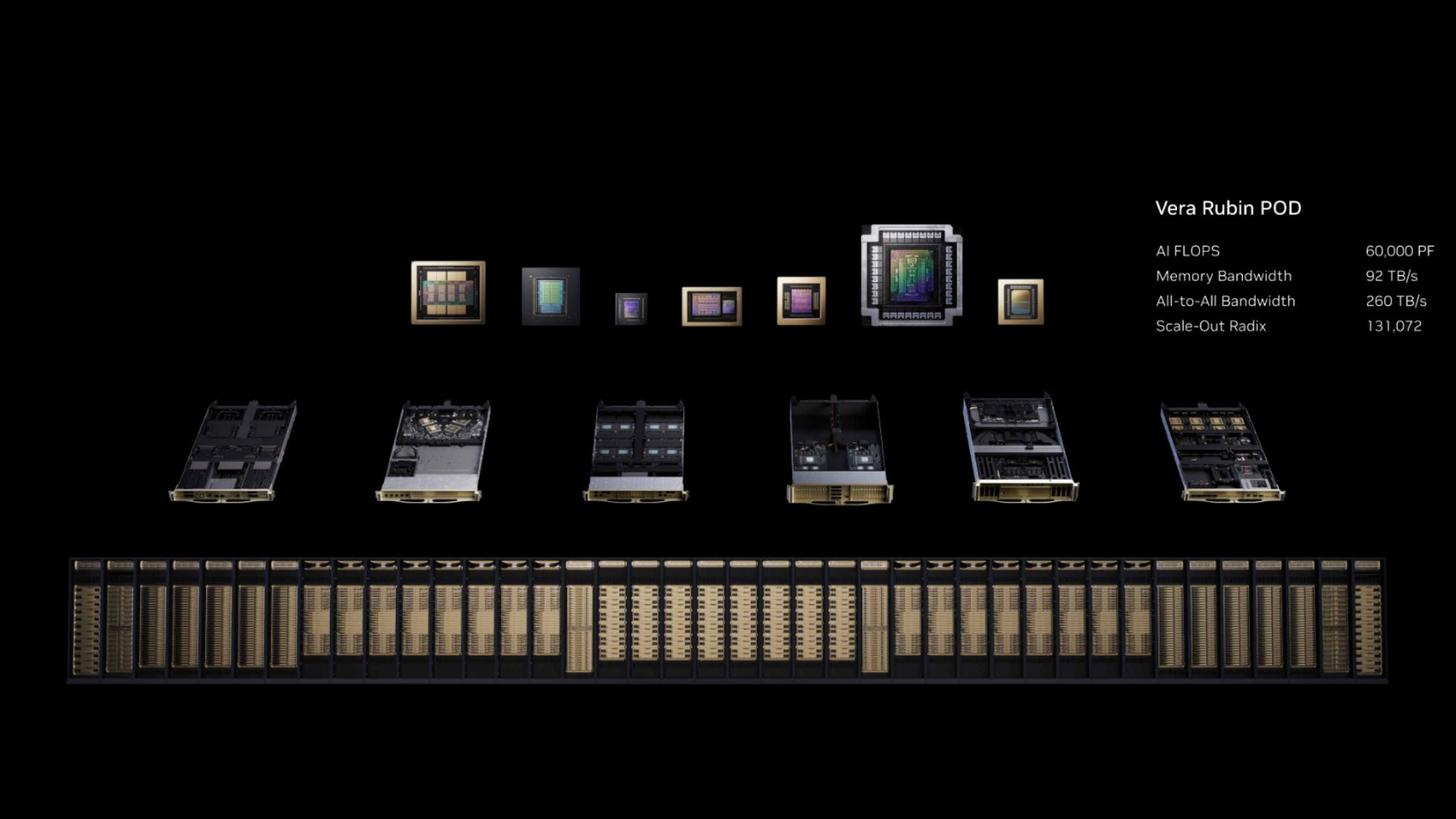

Nvidia’s Vera Rubin POD: Seven chips, 60 exaflops, and one big bet

Nvidia’s Vera Rubin POD: Seven chips, 60 exaflops, and one big bet📷 Published: Apr 18, 2026 at 18:24 UTC

- ★Nvidia ships seven Vera Rubin chips in 2026

- ★60 exaflops AI peak claims raised

- ★POD architecture tests modular scaling

Nvidia’s GTC 2026 announcement wasn’t about one star chip—it was about seven. The Vera Rubin platform, shipping in the second half of 2026, bundles seven distinct processors into a 40-rack AI supercomputer designed for exaflop-scale workloads. According to the company, the system delivers 60 exaflops of AI performance, though such figures routinely reflect peak rather than sustained real-world throughput.

The modular POD (Platform Optimized Design) architecture signals a shift toward scalable AI deployments, but it’s unclear how early adopters will navigate integration costs or cooling demands. Early community reactions on Tom’s Hardware forums highlight skepticism about scalability, comparing Vera Rubin’s promise to AMD’s Instinct and Intel’s Gaudi platforms—each with their own modular claims.

If verified, the 60 exaflop figure would position Vera Rubin as a leap beyond traditional HPC clusters, but marketing slides don’t always translate to plug-and-play performance in data centers rife with latency and power constraints. The platform’s reliance on unannounced custom chips (likely including next-gen accelerators like GB200 or GH200 variants) adds another layer of uncertainty for would-be buyers.

Modular ambition meets untested deployment claims in Nvidia’s AI factory📷 Published: Apr 18, 2026 at 18:24 UTC

Modular ambition meets untested deployment claims in Nvidia’s AI factory

Nvidia’s gamble rests on Vera Rubin’s modularity—slotting seven chips into a 40-rack chassis implies flexibility, but also complexity. Competitors like AMD and Intel have pushed similar architectures, yet none have matched Nvidia’s AI performance claims in controlled tests. The Vera Rubin name itself hints at scale: a nod to astronomer Vera Rubin’s work on dark matter, suggesting the platform targets large-scale data crunching rather than incremental improvements.

For developers, the real test will be adoption. Nvidia’s CUDA ecosystem remains dominant, but Vera Rubin’s pricing and power consumption remain undisclosed. Until real workloads run on the hardware, the 60 exaflop claim is a theoretical ceiling—one that could crumble under the weight of actual deployment challenges.

Where’s the independent verification for the 60 exaflop claim? If Nvidia’s testing was done in a vacuum, how soon will real-world latency and data bottlenecks turn those peak numbers into dust?