Telecoms wage infrastructure arms race with AI grids

Published: Apr 18, 2026 at 10:17 UTC

- Telecom AI grids move inference to the edge
- 5G/6G backbones anchor distributed models
- Early pilots outpace cloud giants' edge plans

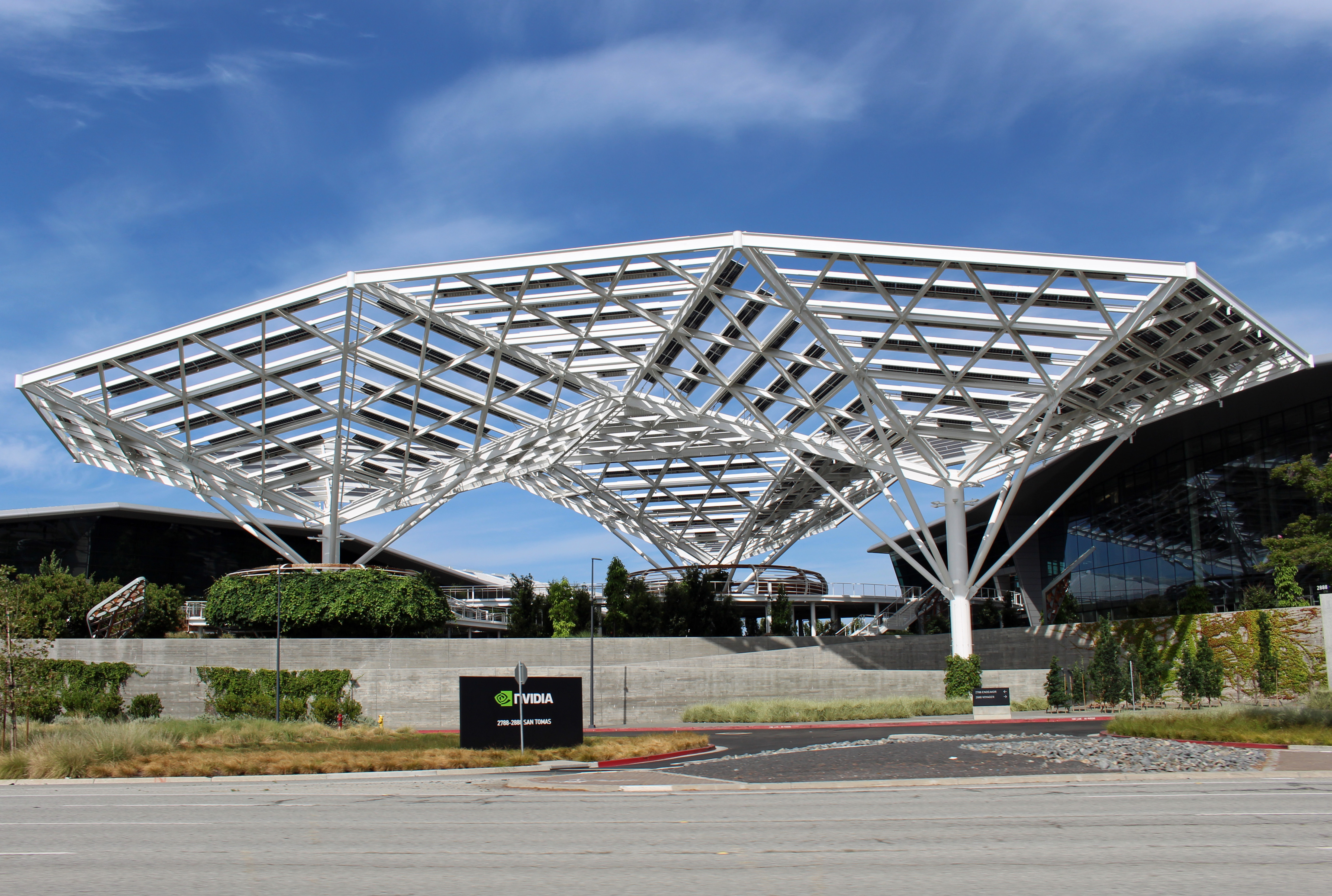

The telecom industry isn't just adopting AI; it's reshaping where that AI lives. At NVIDIA's marquee event, three global carriers unveiled AI grids: geographically dispersed clusters of GPUs and accelerators stitched together over carrier-grade networks [CONFIRMED]. The pitch is simple: run inference at the edge, where data is generated, rather than shipping that data to centralized clouds. Verizon, SK Telecom, and SoftBank aren't just talking performance; they point to pilot programs they say are already running inference for autonomous systems and IoT analytics [SPECULATION].

What's driving this isn't raw compute hunger; it's latency. Autonomous vehicles, industrial robots, and real-time fraud detection all degrade when every decision has to round-trip to a faraway data center. By distributing models across cell towers and fiber junctions, telecoms claim they can cut response times dramatically [LIKELY]. Early benchmarks from NVIDIA's demos suggest 10-15 ms latency for edge-resident models versus 80-120 ms for equivalent cloud deployments [UNCERTAIN], roughly a 5x to 12x improvement: about one order of magnitude at best, not several. The catch? These numbers hinge on proprietary silicon and unproven carrier backbones.
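To put the demo figures in perspective, here is a minimal Python sketch that computes the speedup range implied by the reported latencies and, for context, the round-trip propagation delay over fiber at a few distances. The latency ranges are the unverified demo numbers quoted above; the figure of roughly 200 km per millisecond for light in fiber is a standard approximation; and the helper names `speedup_range` and `fiber_rtt_ms` are hypothetical, chosen for illustration only.

```python
# Reported (unverified) demo latencies, in milliseconds: (low, high).
EDGE_MS = (10.0, 15.0)
CLOUD_MS = (80.0, 120.0)


def speedup_range(edge, cloud):
    """Return (worst_case, best_case) ratios of cloud latency to edge latency."""
    worst = cloud[0] / edge[1]  # fastest cloud vs. slowest edge
    best = cloud[1] / edge[0]   # slowest cloud vs. fastest edge
    return worst, best


def fiber_rtt_ms(distance_km, km_per_ms=200.0):
    """Round-trip propagation delay over fiber, in milliseconds.

    Assumes light covers roughly 200 km per ms in glass and ignores
    routing hops, queuing, and compute time entirely.
    """
    return 2.0 * distance_km / km_per_ms


if __name__ == "__main__":
    lo, hi = speedup_range(EDGE_MS, CLOUD_MS)
    print(f"edge is {lo:.1f}x to {hi:.1f}x faster")  # edge is 5.3x to 12.0x faster
    for km in (10, 500, 2000):
        print(f"{km:>5} km -> {fiber_rtt_ms(km):.1f} ms round trip (propagation only)")
```

Under these assumptions, raw distance explains only part of an 80-120 ms cloud round trip unless the data center sits thousands of kilometers away; the rest would come from routing, queuing, and processing, which is where edge placement claims its gains.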

From pilot to product: the race to own AI workloads in the last mile

The timing isn't accidental. Cloud providers like AWS and Azure have spent years rolling out edge inference services, but telecoms counter with an unfair advantage: they own the pipes. Verizon's 5G Ultra Wideband and SK Telecom's 5GX networks already blanket urban cores [CONFIRMED], which carriers argue makes them the natural home for latency-sensitive AI. Meanwhile, NVIDIA's CUDA and TensorRT toolkits now include telecom-specific APIs, giving developers a familiar path to deploy models without rewriting them for cloud abstractions [LIKELY].

Still, the gap between demo and deployment remains vast. None of the announced pilots has published SLAs on uptime or error rates, and carriers haven't disclosed pricing models. Worse, the AI grid stack, from model compilers to orchestration layers, is still fragmented. Projects like the Linux Foundation's Akraino are trying to standardize this stack, but telecoms and OEMs are racing ahead with proprietary integrations [COMMUNITY]. The real signal here is the power shift: telecoms aren't just partners anymore; they're positioning themselves as the default infrastructure for the next wave of AI.

So much for cloud giants owning AI's future. If telecoms perfect these grids, your next inference job might run on a 5G tower instead of an AWS region—assuming you trust carriers to keep the stack from melting down.