OpenAI buys Promptfoo to automate AI security—finally

Published: Apr 21, 2026 at 18:14 UTC

- ★Promptfoo acquisition targets enterprise security gaps

- ★Automated testing for jailbreaks and prompt injections

- ★Frontier platform gets baked-in vulnerability scanning

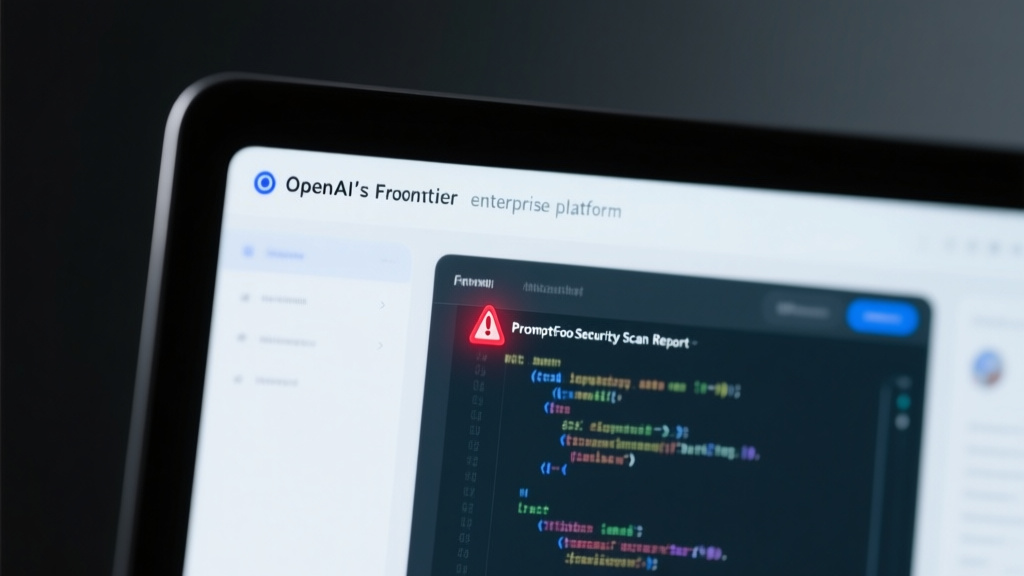

OpenAI is acquiring Promptfoo, a small but sharp AI security startup, to embed automated vulnerability testing directly into its Frontier enterprise platform. The move targets three persistent pain points: jailbreaks, prompt injections, and data leaks—all of which have plagued even the most polished large language models in production. While competitors like Anthropic and Google have rolled out red-teaming tools, OpenAI’s integration goes further by baking security checks into the deployment pipeline itself, not just the research phase.

The acquisition isn’t just about adding features; it’s a response to a growing reality: enterprises won’t adopt AI at scale without ironclad guardrails. Promptfoo’s technology, which has been used by developers to stress-test models for years, will now be a default part of OpenAI’s enterprise offering. That’s a notable shift from the company’s previous approach, which relied on third-party audits and post-hoc fixes. The question isn’t whether this will improve security—it will—but whether it’s enough to outpace the creativity of bad actors, who are already exploiting AI systems in ways researchers didn’t anticipate six months ago.

For developers, the integration could mean fewer late-night fire drills when a model suddenly starts leaking training data or generating harmful outputs. It also signals OpenAI's recognition that security can't be an afterthought in AI development. The Frontier Model Forum, the industry body OpenAI co-founded with Anthropic, Google, and Microsoft, has long advocated for proactive safety measures, and this acquisition puts OpenAI's money where its mouth is, at least for paying customers. The real test will be whether these automated checks can keep up with the breakneck pace of AI advancement, or whether they become another layer of bureaucracy that slows innovation without stopping the next big breach.


The gap between AI hype and real-world safety just narrowed—slightly

The timing of the acquisition is telling. Coming just weeks after OpenAI's latest leadership turmoil, it shows the company doubling down on enterprise trust, a critical factor for its long-term revenue. Promptfoo's technology won't just scan for known vulnerabilities; it's designed to adapt as new attack vectors emerge, a necessity in an ecosystem where exploits evolve faster than patches. That could give OpenAI an edge over rivals like Microsoft's Azure AI, whose safeguards lean more heavily on manual red-teaming and static guardrails.

But let’s not mistake this for a silver bullet. Automated testing can catch common failures, but it’s no substitute for rigorous, human-led adversarial testing. Promptfoo’s own benchmarking data shows that even the most robust models fail unpredictably when faced with novel attack patterns. The real value here isn’t just the technology—it’s the signal that OpenAI is treating security as a first-class feature, not a checkbox.

For the broader AI industry, this move sets a new baseline. If OpenAI can make automated security testing a standard part of its platform, competitors will have to follow suit or risk being seen as negligent. That’s good news for enterprise customers, who’ve been clamoring for more transparency and control. But it also raises the stakes: as AI systems become more integrated into critical infrastructure, the cost of a single failure—whether a data leak or a manipulated output—will only grow. OpenAI’s bet is that Promptfoo’s technology can help prevent those failures before they happen, but the proof will be in the deployment, not the press release.