Google’s AI Overviews: 90% accuracy, 100% of the problems

Published: Apr 7, 2026 at 18:31 UTC

- ★90% accuracy framed as a failure threshold, not a success

- ★“Millions of lies per hour” extrapolated from test-case errors

- ★Speed-over-truth tradeoff baked into generative search design

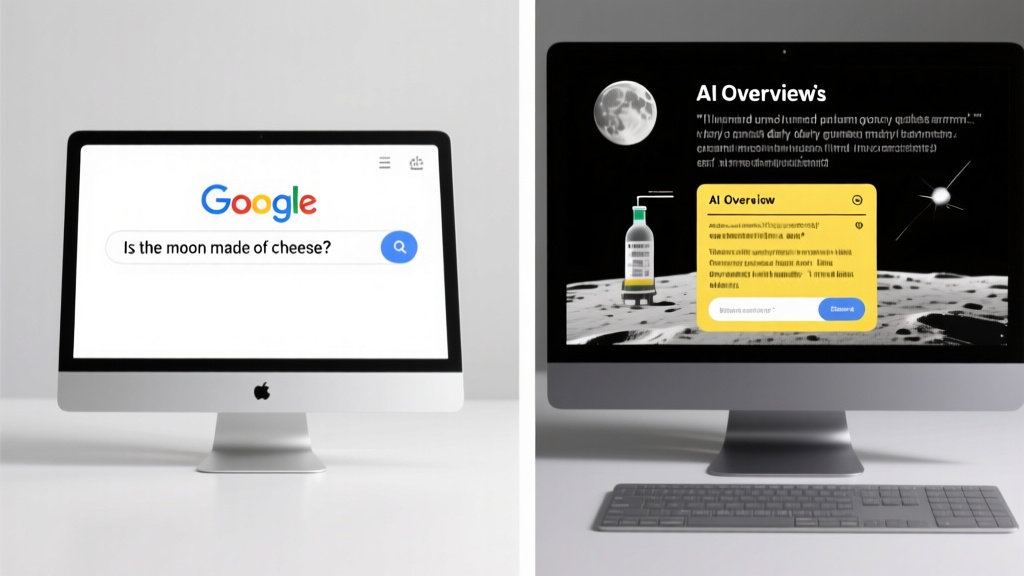

Google’s AI Overviews, a cornerstone of its Search Generative Experience, now faces an inconvenient arithmetic problem. If Ars Technica’s testing is even directionally correct, the feature’s error rate translates to millions of fabricated responses per hour when scaled to Google’s 8.5 billion daily queries (roughly 350 million an hour). That’s not a bug; it’s a design consequence of prioritizing latency and fluency over verifiable truth.
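The scaling argument is simple enough to check by hand. The sketch below is a back-of-the-envelope estimate, not Google’s or Ars Technica’s methodology: the 8.5 billion daily queries and the 10% error rate come from the figures cited here, while `overview_share` (the fraction of queries that actually show an AI Overview) is a hypothetical parameter, and the function name is made up for illustration. It also assumes errors are spread uniformly across queries and hours, which real traffic will not be.

```python
def erroneous_overviews_per_hour(daily_queries: float,
                                 overview_share: float,
                                 error_rate: float) -> float:
    """Estimate how many wrong AI Overview answers are served per hour.

    daily_queries  -- total searches per day (8.5 billion in the article)
    overview_share -- ASSUMPTION: fraction of queries that show an overview
    error_rate     -- fraction of overviews that are wrong (10% if 90% accurate)
    """
    if not (0.0 <= overview_share <= 1.0 and 0.0 <= error_rate <= 1.0):
        raise ValueError("overview_share and error_rate must be between 0 and 1")
    # Spread the daily error count evenly over 24 hours.
    return daily_queries * overview_share * error_rate / 24


DAILY_QUERIES = 8.5e9   # figure cited in the article
ERROR_RATE = 0.10       # implied by the 90% accuracy figure

# The shares below are illustrative guesses, not measured values.
for share in (1.0, 0.25, 0.05):
    per_hour = erroneous_overviews_per_hour(DAILY_QUERIES, share, ERROR_RATE)
    print(f"overview share {share:.0%}: "
          f"~{per_hour / 1e6:.1f} million erroneous answers per hour")
```

If every query produced an overview, the estimate is about 35 million wrong answers an hour. Even if only 5% of queries did, it stays above one million, which is why the “millions per hour” framing survives fairly conservative assumptions.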

The 90% accuracy figure, implied to be Google’s internal benchmark, reads like a confession. In most engineering disciplines, 90% reliability is a red flag, especially when the 10% failure mode involves hallucinated medical advice, invented product specs, or fabricated legal claims. Yet here, it’s positioned as an acceptable tradeoff for convenience. The real question isn’t whether the math checks out, but whether users were ever asked to sign off on this risk-reward calculation.

Early signals suggest the inaccuracies aren’t edge cases but systemic. Unlike traditional search, which surfaces existing content (flaws and all), AI Overviews generates answers—meaning errors aren’t just misranked links but entirely new falsehoods. The feature’s May 2024 rollout framed this as ‘helpful context.’ In practice, it’s a high-stakes bet that users will tolerate fabricated details for the sake of a smoother interface.

The benchmark no one asked for: when ‘mostly correct’ becomes a liability at scale

The deployment friction isn’t technical; it’s philosophical. Google’s search dominance was built on indexing the web, not inventing it. AI Overviews flips that script, asking users to trust an opaque system that, by design, ‘hallucinates’ when uncertain. The response among developers and researchers has been resignation rather than surprise: this was always the likely outcome when generative AI met web-scale queries.

Hardware and latency constraints compound the problem. Real-time fact-checking at Google’s scale would require either prohibitive compute overhead or a radical slowdown in response times, and neither fits the product’s ‘instant answers’ branding. SGE’s architecture (originally built on PaLM 2) wasn’t designed for forensic accuracy; it was optimized for plausible outputs. That’s a feature in a demo, but a flaw in deployment.

The real bottleneck isn’t the AI’s capability—it’s the assumption that ‘good enough’ is good enough. For queries about recipe substitutions, maybe. For health, finance, or civic information? The 10% failure rate isn’t a rounding error. It’s a liability waiting for a class-action lawsuit.