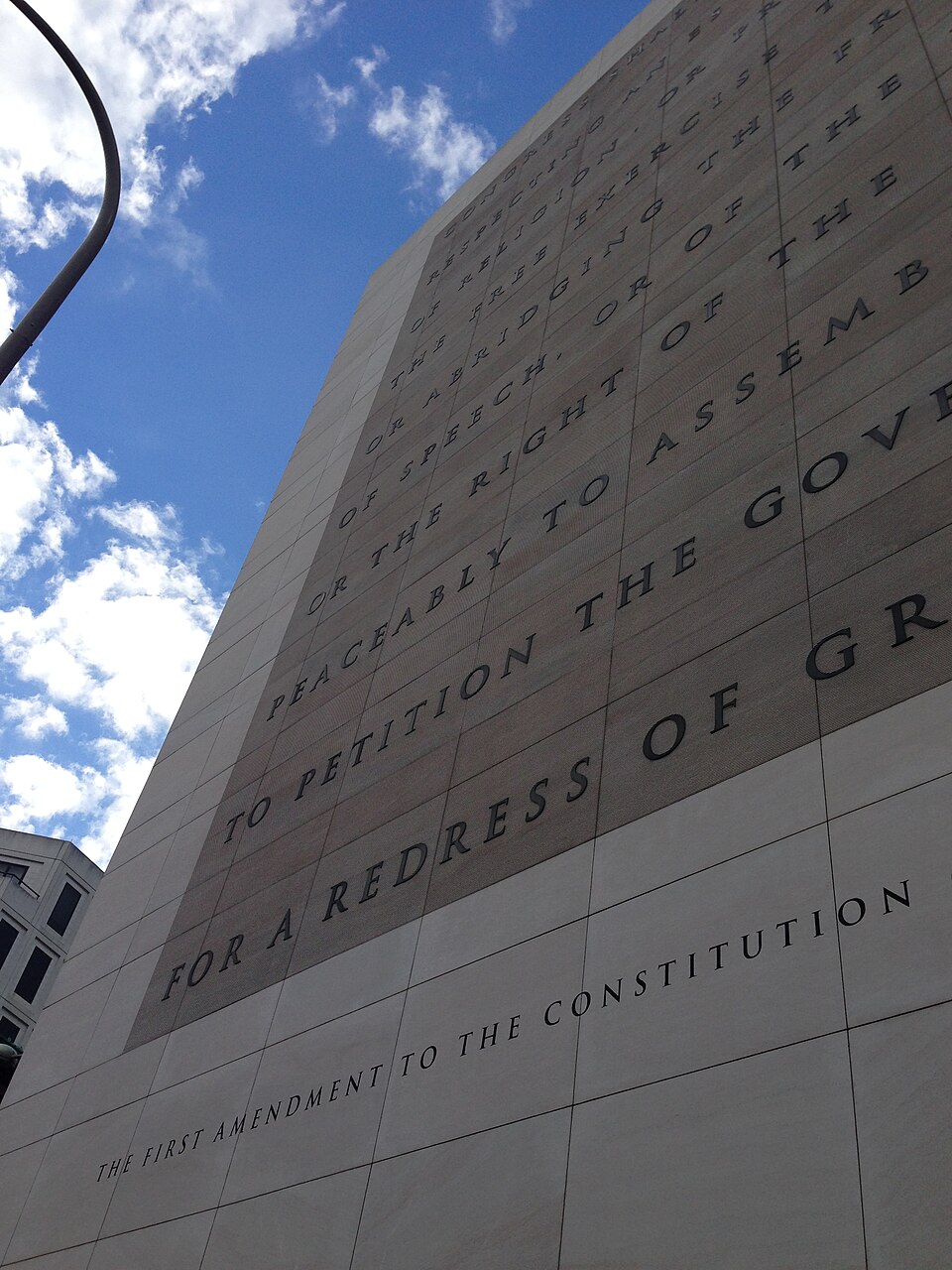

First Amendment vs. Federal Overreach: Anthropic’s Uphill Battle

- Anthropic sues Defense Department

- First Amendment at stake in AI case

- Government defendants accused of excess

The phrase 'making a federal case out of something' usually carries a whiff of exaggeration. Not this time. Anthropic, the AI startup behind Claude, has found itself in a legal showdown with the Department of Defense and other government defendants, arguing that their actions crossed a First Amendment red line. The case, as outlined in Techdirt’s reporting, centers on allegations that federal actors went beyond reasonable oversight—targeting Anthropic in a way that could chill innovation and free expression in AI development.

This isn’t just another regulatory skirmish. The First Amendment implications here are sharp: if government agencies can unilaterally dictate terms to AI companies under vague or pretextual justifications, the precedent could ripple far beyond Silicon Valley. Trump’s name surfaces in the reporting, and although his direct role is unclear, it adds a layer of political noise; the core tension remains technical and legal. What’s actually at stake isn’t just Anthropic’s bottom line, but the broader question of how much latitude federal agencies have to intervene in emerging tech.

For all the hype around AI’s transformative potential, cases like this remind us that the real bottlenecks aren’t just computational—they’re legal, political, and often invisible until they’re weaponized. The Defense Department’s involvement suggests this isn’t merely an administrative dispute. It’s a test of whether federal power can be wielded with precision, or whether it’s just another blunt instrument in the toolbox of bureaucratic overreach.

The gap between legal principle and federal heavy-handedness

The developer community isn’t waiting for a verdict to react. Early signals from technical forums and GitHub discussions suggest unease about the case’s implications for open-source AI projects. If federal agencies can target Anthropic under these circumstances, smaller teams or independent researchers might think twice before pushing boundaries—especially in areas deemed 'sensitive' by the government. This chilling effect isn’t hypothetical; it’s already a recurring theme in tech policy debates, from encryption to drone regulation.

The competitive angle here is just as telling. Anthropic’s rise has been driven by its commitment to safety and transparency, a contrast to some of its rivals’ more opaque approaches. If the government’s actions tilt the playing field—even unintentionally—it could advantage incumbents with deeper legal pockets, or those more willing to comply with federal demands. That’s not just a market distortion; it’s a potential innovation tax.

So far, the courts haven’t weighed in on the specifics of Anthropic’s claims. But the mere fact that this case exists raises a pointed question: when does federal oversight become federal overreach? The answer won’t just shape Anthropic’s future. It could redefine the boundaries of what AI companies—and their users—are allowed to build, discuss, or even experiment with. That’s a reality gap no benchmark can measure.

For all the noise about AGI and 'agentic futures,' the most immediate threat to AI innovation might not be technical limits, but legal ones. And unlike a failed model checkpoint, regulatory overreach doesn’t come with a rollback button.